The rise of AI agents and autonomous systems is pushing software development paradigms to their limits. Traditional QA, with its static test suites and scheduled runs, struggles to keep pace with the fluid, context-aware nature of agentic code. Enter Just-in-Time (JIT) Testing – a paradigm shift where tests are generated, selected, and executed dynamically, precisely when and where they're needed. This isn't just about speed; it's about creating a responsive safety net for a new breed of software.

How JIT Testing Works: From Static to Dynamic

Static testing relies on a pre-defined battery of tests. JIT Testing flips this model:

- Trigger & Analyze: Code changes, user interactions, or an agent's decision trigger the JIT system. It analyzes the context – what changed, what dependencies are affected, and the agent's current goal.

- Intelligent Test Selection: Instead of running everything, an AI-powered selector picks the most relevant unit and integration tests, and can even generate new scenario-based tests on the fly.

- On-Demand Execution: Tests execute in a containerized, ephemeral environment, providing immediate feedback to the developer or the agent itself.

- Feedback Loop: Results are fed back into the system to improve future test selection and generation.

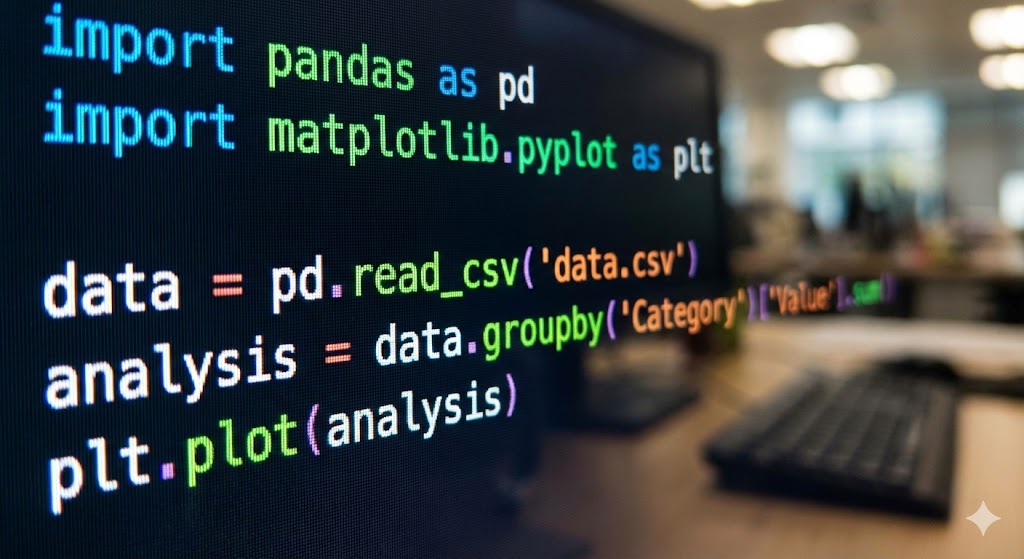

```python
# Conceptual pseudo-code for a JIT test trigger.
# The collaborators (ai_selector, test_generator) and the helper methods
# analyze_impact, execute_in_isolation, and notify are placeholders that a
# real system would have to supply.

class JITTestOrchestrator:
    def __init__(self, ai_selector, test_generator):
        self.ai_selector = ai_selector
        self.test_generator = test_generator

    def on_code_change(self, commit_context, agent_state=None):
        """Triggered by a commit or agent action."""
        # 1. Analyze impact
        affected_modules = self.analyze_impact(commit_context.diff)

        # 2. Select relevant tests intelligently
        #    (copied into a list so generated tests can be appended safely)
        selected_tests = list(self.ai_selector.predict_tests(
            modules=affected_modules,
            agent_goal=agent_state.get("current_goal") if agent_state else None,
        ))

        # 3. Generate new tests for novel scenarios
        if agent_state and agent_state.get("is_novel_action"):
            generated_tests = self.test_generator.for_scenario(agent_state["scenario"])
            selected_tests.extend(generated_tests)

        # 4. Execute on-demand
        test_results = self.execute_in_isolation(selected_tests)

        # 5. Provide feedback to the author and, if present, to the agent
        self.notify(commit_context.author, test_results)
        if agent_state:
            agent_state["last_test_pass"] = test_results.all_passed

        return test_results
```
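
One plausible way to implement the impact-analysis step is to walk a reverse dependency graph outward from the changed modules. The sketch below is an illustration under assumptions, not a description of any particular tool: the `DEPENDENTS` map, the module names, and the `affected_modules` function are all hypothetical.

```python
from collections import deque

# Hypothetical reverse dependency map: module -> modules that import it.
DEPENDENTS = {
    "billing.tax": ["billing.invoice"],
    "billing.invoice": ["api.checkout", "reports.monthly"],
    "api.checkout": [],
    "reports.monthly": [],
    "auth.tokens": ["api.checkout"],
}


def affected_modules(changed, dependents):
    """Return the changed modules plus everything that transitively depends on them."""
    seen = set(changed)
    queue = deque(changed)
    while queue:
        module = queue.popleft()
        for dependent in dependents.get(module, []):
            if dependent not in seen:
                seen.add(dependent)
                queue.append(dependent)
    return seen


# A change to billing.tax reaches the invoice module and both of its consumers.
impacted = affected_modules(["billing.tax"], DEPENDENTS)
print(sorted(impacted))
```

A breadth-first walk keeps the result bounded by the size of the graph and handles cyclic imports, since each module is visited at most once.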

JIT vs. Traditional QA: A Practical Comparison

| Aspect | Traditional QA | Just-in-Time (JIT) Testing |
|---|---|---|
| Execution Cadence | Scheduled (CI/CD pipelines) | Event-driven, on-demand |
| Test Suite | Static, pre-defined | Dynamic, context-aware selection & generation |
| Feedback Speed | Minutes to hours post-commit | Seconds, often pre-commit or mid-agent task |
| Resource Usage | High (runs full suites) | Optimized (runs only what's necessary) |
| Ideal For | Stable features, monolithic apps | Microservices, AI agents, rapid prototyping |

Limitations and Considerations:

- Initial Setup Complexity: Requires robust test analysis AI, a comprehensive test corpus, and fast container orchestration.

- Over-reliance on AI: The selector's accuracy is critical; false negatives (missing critical tests) can be costly.

- Not a Silver Bullet: JIT testing complements, but doesn't replace, broader integration, security, and performance testing cycles.
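
One way to limit the cost of selector false negatives is to fall back to the full suite whenever the selector looks unsure. The following is a minimal sketch under assumptions: the `Prediction` shape, the `choose_tests` function, and both thresholds are hypothetical and would need tuning against real data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Prediction:
    """A selector's guess that a test is relevant to the current change (hypothetical shape)."""
    test_id: str
    relevance: float  # 0.0 to 1.0, as reported by the selector


def choose_tests(predictions, full_suite, min_relevance=0.5, min_selected=3):
    """Pick tests above the relevance threshold, or fall back to the full suite.

    A weak or near-empty selection is treated as "the selector does not know",
    not as "nothing needs testing", trading speed for safety.
    """
    selected = [p.test_id for p in predictions if p.relevance >= min_relevance]
    if len(selected) < min_selected:
        return list(full_suite), "fallback_full_suite"
    return selected, "selected"


full_suite = ["test_a", "test_b", "test_c", "test_d", "test_e"]
confident = [
    Prediction("test_a", 0.9),
    Prediction("test_b", 0.8),
    Prediction("test_c", 0.7),
    Prediction("test_d", 0.1),
]
unsure = [Prediction("test_a", 0.4), Prediction("test_b", 0.2)]

print(choose_tests(confident, full_suite))  # narrow, selected run
print(choose_tests(unsure, full_suite))     # falls back to everything
```

Returning the reason alongside the test list makes it easy to track how often the fallback fires, which is itself a rough signal of selector quality.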

JIT Testing represents the natural evolution of QA for an agentic era. By providing immediate, context-sensitive validation, it allows developers and AI agents to move faster with confidence, reducing the feedback loop from hours to moments. It turns QA from a gatekeeper into an integrated, intelligent companion in the development flow.

Next Steps for Your Team:

- Start by instrumenting your codebase to understand change impact better.

- Experiment with AI-powered test selection tools for your existing suite.

- Consider how dynamic test generation could validate the unique decision paths of any autonomous systems you're building.
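
Before adopting an AI-powered selector, a team could try a simple history-based baseline against its existing suite. The sketch below is one possible starting point rather than a recommended design: it records which tests failed when which modules changed, then ranks tests by observed failure rate. The `HistorySelector` class, its methods, and the module and test names are hypothetical.

```python
from collections import defaultdict


class HistorySelector:
    """Rank tests by how often they failed when a given module changed."""

    def __init__(self):
        # (module, test_id) -> [runs, failures]
        self._stats = defaultdict(lambda: [0, 0])

    def record(self, changed_modules, test_id, passed):
        """Feedback loop: store the outcome of one test run for later selection."""
        for module in changed_modules:
            stats = self._stats[(module, test_id)]
            stats[0] += 1
            if not passed:
                stats[1] += 1

    def select(self, changed_modules, top_k=2):
        """Return up to top_k tests with the highest historical failure rate."""
        best = {}
        for (module, test_id), (runs, failures) in self._stats.items():
            if module in changed_modules and runs:
                rate = failures / runs
                best[test_id] = max(best.get(test_id, 0.0), rate)
        # Highest failure rate first; ties broken alphabetically for stable output.
        ranked = sorted(best.items(), key=lambda item: (-item[1], item[0]))
        return [test_id for test_id, _ in ranked[:top_k]]


selector = HistorySelector()
selector.record(["billing"], "test_invoice_total", passed=False)
selector.record(["billing"], "test_invoice_total", passed=True)
selector.record(["billing"], "test_login", passed=True)
selector.record(["auth"], "test_login", passed=False)

print(selector.select(["billing"]))
```

A baseline like this also gives a yardstick: a learned selector is only worth its setup cost if it catches failures that the historical ranking misses.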

This approach is gaining traction as teams tackle the QA challenges of AI-driven development, as discussed in this analysis on GPU optimization strategies for LLM inference, where efficient resource use is equally paramount. For a deeper dive into the future of software validation, you can explore more trends in our coverage of modern testing methodologies.

This insight is based on analysis of current industry shifts towards more dynamic development practices. You can read about a concrete implementation of innovative, context-aware engineering in this Facebook Engineering article on cross-device authentication.